In this paper, we discuss the surprising result that no-change detectors can outperform change detectors in a streaming classification evaluation. This may be due to temporal dependence in the data, and we argue that change detectors should not be evaluated using classifiers alone. We hope that this paper opens several directions for future research.

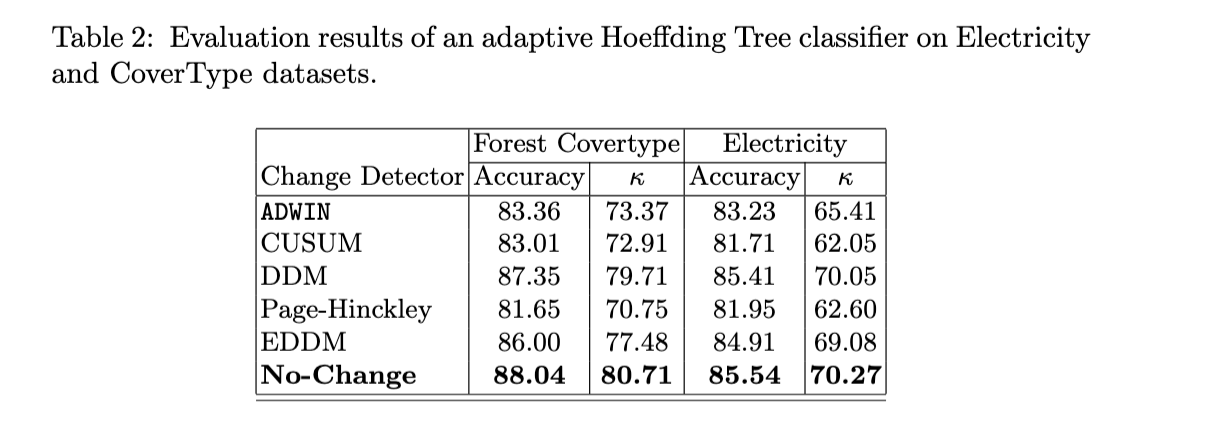

In an experiment with an adaptive Hoeffding Tree (HT) on the electricity and covertype datasets, the best performance was achieved with the No-Change Detector. This detector signals a change every 60 instances; it is a no-change detector in the sense that it does not actually detect change in the stream. Surprisingly, classifiers using this no-change detector obtain better results than those using standard change detectors.
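To make the behaviour of such a detector concrete, the following is a minimal sketch in Python, not the implementation used in the paper. The class name `PeriodicNoChangeDetector`, the `update` method, and the helper `change_points` are hypothetical names chosen for illustration; only the period of 60 instances comes from the text above.

```python
class PeriodicNoChangeDetector:
    """Hypothetical sketch: signals 'change' every `period` instances.

    The detector ignores its input entirely, so it does not detect
    anything in the stream; it only counts instances.
    """

    def __init__(self, period=60):
        if period <= 0:
            raise ValueError("period must be positive")
        self.period = period
        self.n_seen = 0

    def update(self, error):
        """Consume one prediction outcome; return True when change is signalled."""
        self.n_seen += 1
        return self.n_seen % self.period == 0


def change_points(detector, errors):
    """Return the 1-based positions at which the detector signals change."""
    return [i for i, e in enumerate(errors, start=1) if detector.update(e)]


# A stream of 200 outcomes with no errors at all: the detector still fires.
errors = [0] * 200
signals = change_points(PeriodicNoChangeDetector(period=60), errors)
print(signals)  # [60, 120, 180]
```

In an adaptive classifier, each signal would typically trigger a reset or replacement of the model, so this detector amounts to periodically restarting the learner regardless of what the data look like.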

Albert Bifet: Classifier Concept Drift Detection and the Illusion of Progress. ICAISC (2) 2017: 715-725